How Google’s Nano Banana Pro Is Killing the Era of ‘Leaks’


Google just dropped a bombshell in the world of generative AI — and it’s not a new chatbot. Their latest image-generation system, Nano Banana Pro, is so photorealistic that it’s effectively killing the concept of “leaks” as we know them.

A New Chapter for Fake Leaks

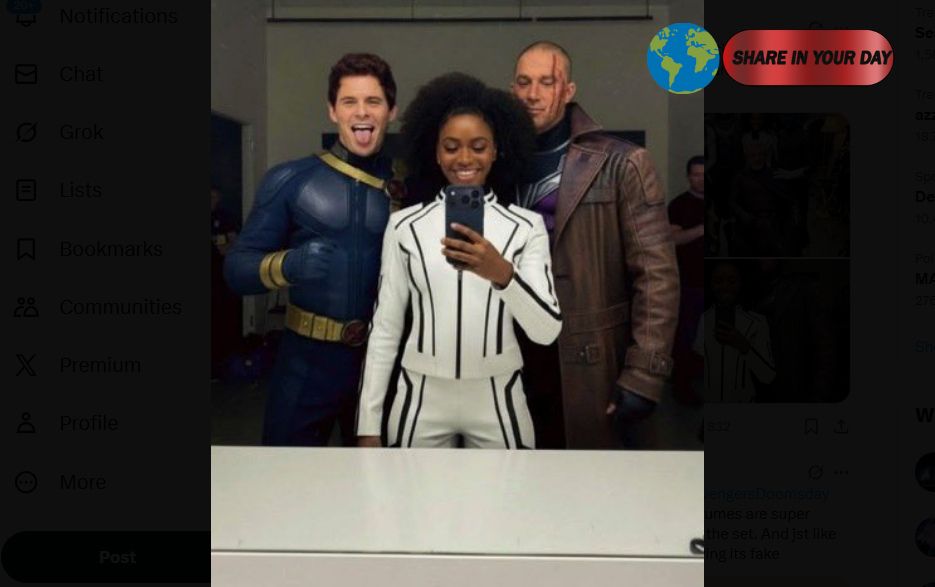

In recent days, social media has been flooded with what look like set photos and promotional stills for major upcoming franchises: Avengers: Doomsday, The Boys Season 5, even Fortnite Chapter 7. But here’s the catch: many of them are almost certainly AI-generated (Forbes).

For decades, “leaks” meant blurry paparazzi shots from a film set, early builds of video games, or insider images shared by someone in the know. Even fake leaks required effort: Photoshop skills, poorly taken snapshots, or physical leaks on set. Now? Nano Banana Pro is changing the game (Forbes).

Reality vs. AI: Blurred Lines

Paul Tassi, the author of the Forbes piece, admits that even as someone who’s spent a year parsing AI from real images, he was nearly fooled. He ran his own experiment: in just four minutes, he used three simple prompts and generated a convincing image of Hugh Jackman’s Wolverine in front of a Doomsday green screen (Forbes).

That’s not hyperbole — the technology is that good. And the impact is already tangible: real leaks are being called fake, and fake ones are being treated like gospel.

Why Studios Might Secretly Be Cheering

From a studio’s perspective, this shift could be a dream. Authentic leaks, whether from sets or early game builds, have long been a headache. They spoil reveals, give away major plot or design points, and undercut marketing plans. But if every “leak” can be written off as AI? Suddenly, controlling the narrative becomes easier. As Tassi puts it: “Studios must be loving this.” (Forbes)

The Bigger Picture: AI Reality Is Unsettling

This isn’t just about leaks. We’re entering a new era where trust itself is under threat. AI-generated images are no longer cartoonish or obviously fake — they’re nearly indistinguishable from real ones.

That creates several troubling dynamics:

- Disinformation risk: If anyone can make believable images, it’s going to be harder than ever to tell what’s real.

- Credibility crisis: Even whistleblowers and insiders may be dismissed because “it’s just AI.”

- Verification burden: As Tassi notes, people will need to scrutinize every image five or ten times, or just give up (Forbes).

One particularly unsettling detail: according to Tassi, Google has imposed far fewer guardrails on Nano Banana Pro than other AI systems have, including for public figures (Forbes). That raises longer-term concerns about deepfakes, AI impersonation, and misuse.

What This Means for the Future

- For consumers: Be skeptical. Treat every “leak” with a grain of salt.

- For creators and studios: This is a double-edged sword. AI can help control narratives — but it also erodes trust.

- For regulators: The pace of AI is accelerating. We may soon need frameworks for verifying authenticity or labeling synthetic media.

Final Thought

Google’s Nano Banana Pro didn’t just level up AI-generated art — it blew a hole through the entire “leak” ecosystem. The fallout is just beginning. If every image can be faked, the very idea of a credible leak might vanish. And that’s a game-changer for Hollywood, gaming, fans — and truth itself.